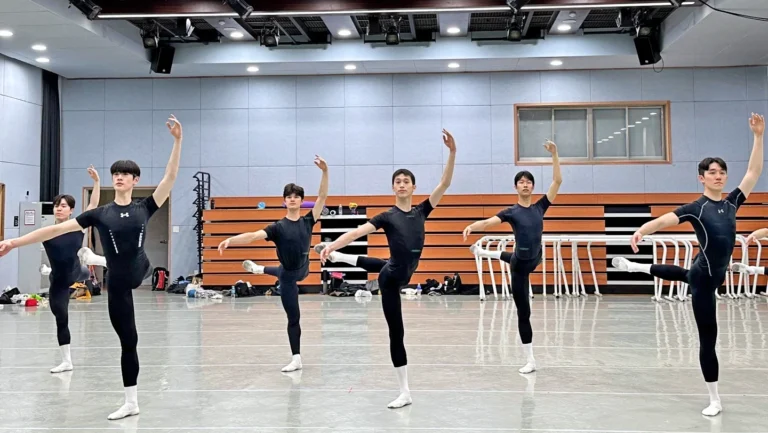

Dance is a fairly analog art form. We typically rely on being in the same space together to learn or perform, and usually pass down knowledge directly from one body to another. So it’s somewhat surprising that a new four-year research project at New York University’s Tandon School of Engineering, funded by a $1.2 million grant from the National Science Foundation, is using dance to study the possibilities of point-cloud video, a 3D immersive video technology.

“Dancers provide all of the challenges—space, time, color, contrast, big, small, intricate pathways—that the researchers want to solve to create a predictive model that then can be used in numerous settings, like teaching surgeons,” says Sarah Marcus, director of education and community engagement at Mark Morris Dance Group, which will partner on the project along with NYU’s Tisch dance department. At the same time, this tech also offers new opportunities for dance education: “You can look at the specificity of movement, healthy alignment, proper execution from every angle,” Marcus says.

Dance Teacher spoke with the co-principal investigator of the project, R. Luke DuBois, associate professor in the Technology, Culture, and Society Department at NYU Tandon, about this research and its potential implications for dance education.

Tell me how point-cloud video technology works.

Did you ever see The Matrix? They would surround Keanu Reeves with a bunch of still photo cameras, and they would all go off, boom, boom, boom. Now you can do it with video cameras with depth sensors and end up with footage that can be viewed at all angles and manipulated in a 3D environment.

So how are you using that tech in this project with Tisch and Mark Morris?

I have two colleagues, Yong Liu and Yao Wang, in our Electrical and Computer Engineering Department, who do research around video compression for point-cloud video. Right now, point-cloud video is kind of a pain in the butt to send around. You need a big internet connection. The hypothesis that these two folks have is that you could optimize what you’re sending based on what the viewer wants to see. Say I’m looking at a 3D model of a dancer and I really only care about their shoulder—the system should only send me the data for their shoulder.
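For readers curious what "only send what the viewer wants to see" might look like in practice, here is a minimal sketch in Python. It is not the NYU team's method: the `Point` type, the body-part `label` field, and the `select_for_viewer` function are all hypothetical names invented for illustration. The sketch also assumes that each point in a frame has already been tagged with the body part it belongs to, and that a thinned-out copy of the rest of the body is still sent for context.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Point:
    x: float
    y: float
    z: float
    label: str  # hypothetical body-part tag, e.g. "shoulder"


def select_for_viewer(frame, focus_label, background_stride=10):
    """Keep every point in the viewer's region of interest and only
    every Nth point from the rest of the frame (an assumed policy)."""
    focus = [p for p in frame if p.label == focus_label]
    rest = [p for p in frame if p.label != focus_label]
    return focus + rest[::background_stride]


# A toy frame: 100 shoulder points and 1,000 leg points.
frame = [Point(0.1 * i, 1.5, 0.0, "shoulder") for i in range(100)]
frame += [Point(0.1 * i, 0.5, 0.0, "leg") for i in range(1000)]

payload = select_for_viewer(frame, "shoulder")
print(f"full frame: {len(frame)} points, sent: {len(payload)} points")
```

In this toy case the viewer receives all of the shoulder detail while the total number of points sent drops from 1,100 to 200. A real system would also have to predict where the viewer will look next, which is the harder problem the researchers describe.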

Why did you want to work with dancers in particular for this?

Because dance involves bodies in motion. And it’s currently technologically a little…it’s not commonly future-forward, right? And the theory was to partner with Mark Morris and Tisch to see what it would be like to really rev this thing up where a dance student could put on a VR headset and see 3D models of the dances they’re supposed to learn. They can zoom in, turn, pivot, get in there, and really see what’s going on.

Will the dancers involved have access to the results?

Yeah. To start, we’re going to capture Mark Morris instructors doing stuff they teach all the time. Then it’s going to live in a setup at Mark Morris studios and we’re going to study how people work with that footage. The students can put on the VR goggles, and there’s like a video game controller, and you can boogie around in the footage, move perspectives, and we will be asking, Did it feel smooth? Was this actually helpful? Did you feel like you needed to move your head around more than you should need to?

We’re also going to make an open-source online repository so that other researchers will also be able to use this footage.

What excites you about these kinds of collaborations?

By doing this work with people who might not have exposure to research-grade computing tools, this gets people inspired to say, “Hey, I saw this really cool thing in my Tuesday morning dance class. Maybe I want to learn how it was made.” Next thing you know, you’ve got a computer scientist.

Why should dancers get into computer science?

We need people with as broad of experience as possible engaging in computers. I have a fantastic alumna, Caitlin Sikora, who did her MFA at Tisch dance and then did a master’s with us, and she’s now a machine learning researcher at Google. She looks at the ways gesture and the body get analyzed in AI systems. These days, the computer is increasingly looking at my body and analyzing how I’m moving to do stuff [like snoozing an alarm when showing a particular gesture]. People who studied dance are optimized to participate in that discussion.

Click here to view a video that is part of a holiday card that NYU Tandon School of Engineering made by capturing two Mark Morris Dance Group members performing a snippet from Morris’ The Hard Nut on point-cloud video. If you have an augmented reality headset, you can also view this in 3D.

The behind-the-scenes clip below shows two Mark Morris Dance Group members performing a phrase from Morris’ The Hard Nut for point-cloud video cameras to capture 3D files of their movement.